Remember to restart your terminal and launch PySpark again: `pyspark`. This should start a new Jupyter Notebook in your web browser. Create a new notebook by clicking on New -> Python 3.

# Download Spark tgz code

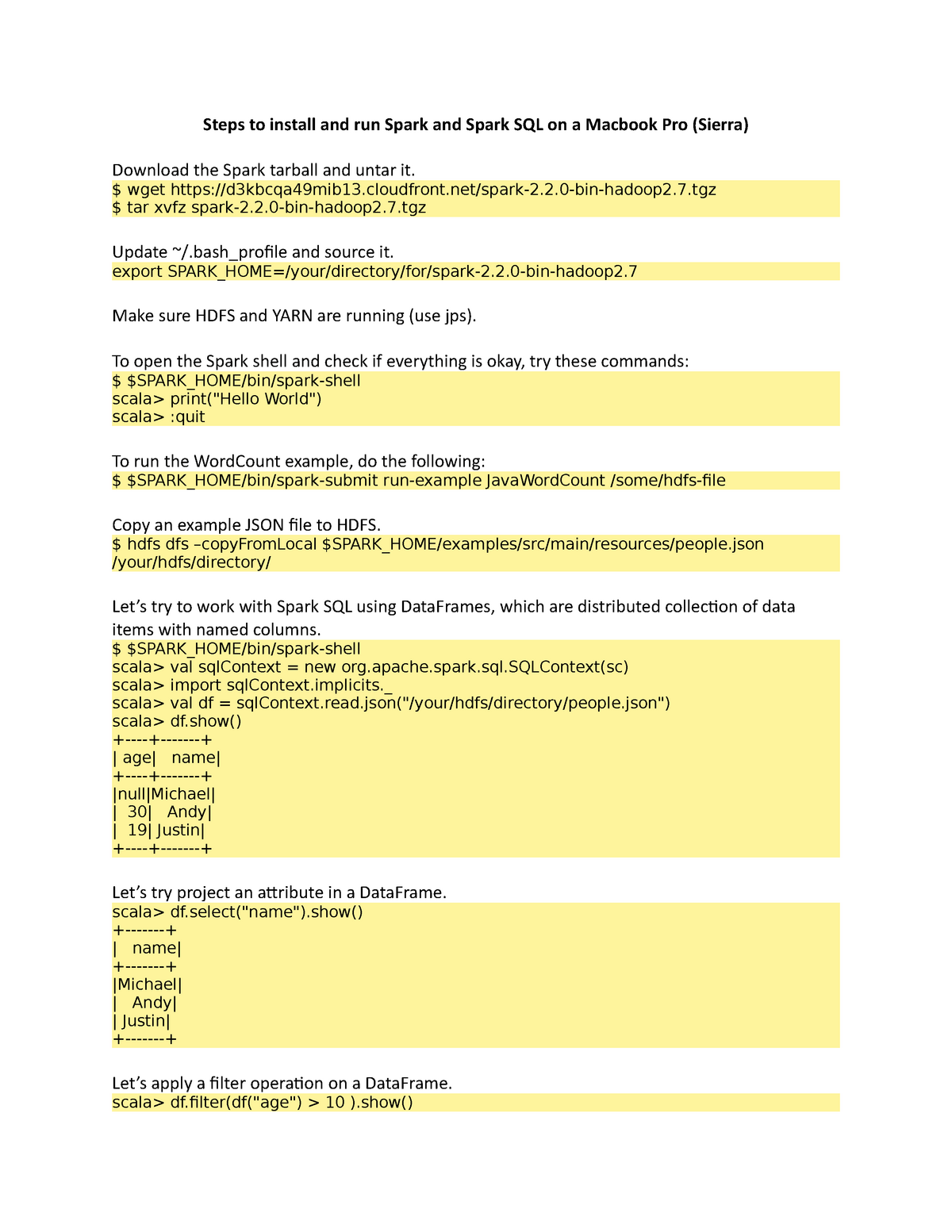

Jupyter Notebook is a popular web application that allows you to create documents containing live code and visualizations of the running results.

Unzip the Spark binaries to the folder /hadoop/spark-3.0.0: run `mkdir /hadoop/spark-3.0.0`, then `tar -xvzf spark-3.0.0-bin-without-hadoop.tgz -C /hadoop/spark-3.0.0 --strip 1`. Set up the SPARK_HOME environment variable and add its bin subfolder to the PATH variable.

Restart your terminal and you should be able to start PySpark now: `pyspark`. If everything goes smoothly, you should see a banner that starts with "Welcome to".

To open PySpark in Jupyter, set the environment variables below in your ~/.bashrc (or ~/.zshrc): `export PYSPARK_DRIVER_PYTHON=jupyter` and `export PYSPARK_DRIVER_PYTHON_OPTS='notebook'`.

To try it out, here is a mini script that counts the words in a string. It starts with `content = "How happy I am that I am gone"` and then turns the list of words into an RDD with `rdd = sc.parallelize(content.split(' '))`.
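The word-count script mentioned above can be sketched as follows. Only the `content` string and the `sc.parallelize(content.split(' '))` call come from the text; the `map` and `reduceByKey` steps are an assumed, conventional way to finish the count, and the function names `spark_word_count` and `local_word_count` are made up for this illustration. The Spark function expects the `sc` object that a PySpark shell or notebook session provides.

```python
from collections import Counter


def spark_word_count(sc, content):
    """Count words with Spark. `sc` is the SparkContext from a PySpark session."""
    rdd = (
        sc.parallelize(content.split(" "))
        .map(lambda word: (word, 1))        # pair each word with a count of one
        .reduceByKey(lambda a, b: a + b)    # add up the counts per distinct word
    )
    return dict(rdd.collect())


def local_word_count(content):
    """Plain-Python reference result, useful for checking the Spark output."""
    return dict(Counter(content.split(" ")))


if __name__ == "__main__":
    content = "How happy I am that I am gone"
    print(local_word_count(content))
    # Inside a PySpark shell or notebook `sc` already exists,
    # so the Spark version can be run as well.
    if "sc" in globals():
        print(spark_word_count(sc, content))
```

In a notebook started through `pyspark`, calling `spark_word_count(sc, content)` should give the same counts as the plain-Python reference, which is a quick way to confirm the installation works end to end.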

This short tutorial walks through the steps to install Apache Spark.

Unzip the archive and move it to your favorite place: `tar -xzf spark-2.4.5-bin-hadoop2.7.tgz`. Then create a symbolic link: `ln -s /opt/spark-2.4.5 /opt/spark` (the link assumes the extracted folder was moved to /opt/spark-2.4.5). This way, you will be able to download and use different versions of Spark, because switching versions only means pointing the link at another folder.

Then you need to tell your system where to find Spark by editing ~/.bashrc (or ~/.zshrc): `export SPARK_HOME=/opt/spark`.
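After editing ~/.bashrc (or ~/.zshrc) and restarting the terminal, a small check like the one below can confirm that the shell picked up the settings. This is a minimal sketch rather than part of the original instructions: the function name `check_spark_env` is hypothetical, and it only inspects the environment variables, without verifying that the folders exist on disk.

```python
import os


def check_spark_env(environ=None):
    """Return a list of problems found; an empty list means the setup looks fine."""
    env = os.environ if environ is None else environ
    problems = []

    spark_home = env.get("SPARK_HOME")
    if not spark_home:
        problems.append("SPARK_HOME is not set")
        return problems

    # Normalise both sides so a trailing slash does not cause a false alarm.
    expected_bin = os.path.normpath(os.path.join(spark_home, "bin"))
    path_entries = [
        os.path.normpath(entry)
        for entry in env.get("PATH", "").split(os.pathsep)
        if entry
    ]
    if expected_bin not in path_entries:
        problems.append(f"{expected_bin} is not on PATH")

    return problems


if __name__ == "__main__":
    for problem in check_spark_env():
        print("WARNING:", problem)
```

Running the script prints nothing when SPARK_HOME is set and its bin subfolder is on PATH.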

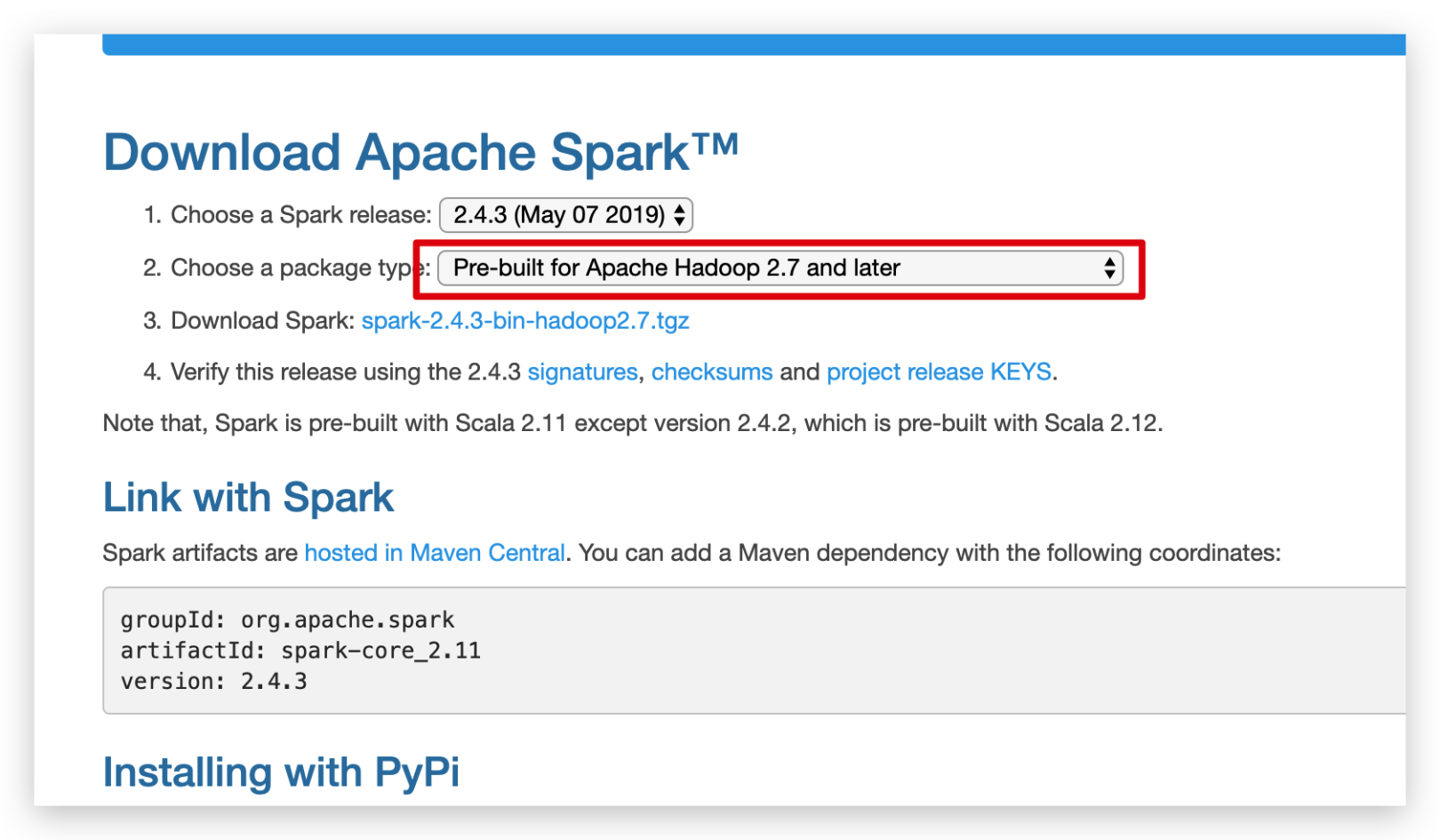

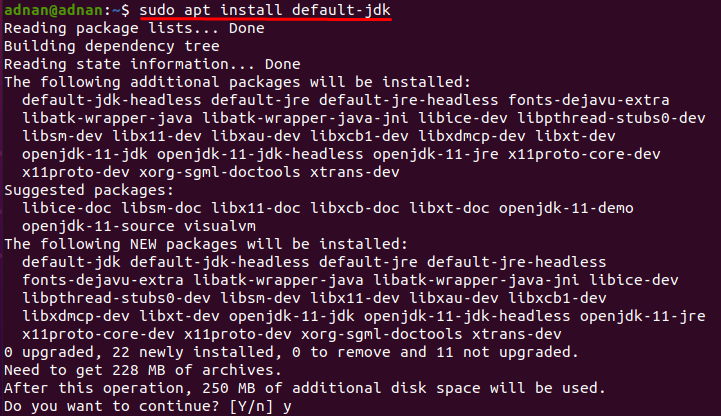

Apache Spark is a unified analytics engine for large-scale data processing. This article will help you install Spark and set up Jupyter Notebooks in your Linux/Mac environment.

Please make sure you have Java 8 or above installed. Please make sure you have Python 3 installed.

To download Apache Spark 2.4.0, visit the downloads page. Choose a package pre-built for Apache Hadoop and download it directly. Today, that is spark-2.4.3-bin-hadoop2.7.tgz.

Once it has downloaded, use 7-zip to extract the folder to a known location, `c:\spark-2.4.3-bin-hadoop2.7`, for example. Again, take note of where you extracted the Spark folder. If your Spark folder looks like mine, then you should be in good shape.

We have curated a list of high level changes here, grouped by major modules. You can consult JIRA for the detailed changes. To see a detailed list of changes for each version of Scala, please refer to the changelog.

Other releases: you can find the links to prior versions or the latest development version below. You can also find the installer download links for other operating systems, as well as documentation and source code archives for Scala 2.11.8, below.
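The Java 8 and Python 3 prerequisites mentioned above can be checked from Python itself. The sketch below is only an illustration: the helper names `parse_java_major` and `installed_java_major` are hypothetical, and the parsing assumes the usual `java -version` output, where Java 8 and earlier report themselves as `1.x`.

```python
import re
import shutil
import subprocess
import sys


def parse_java_major(version_output):
    """Extract the major Java version from `java -version` output, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first, second = match.group(1), match.group(2)
    # Java 8 and earlier use the 1.x scheme, for example "1.8.0_281".
    if first == "1" and second is not None:
        return int(second)
    return int(first)


def installed_java_major():
    """Return the major version of the `java` found on PATH, or None."""
    if shutil.which("java") is None:
        return None
    # `java -version` writes its report to stderr rather than stdout.
    result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    return parse_java_major(result.stderr or result.stdout)


if __name__ == "__main__":
    print("Python 3:", sys.version_info.major >= 3)
    java_major = installed_java_major()
    print("Java 8 or above:", java_major is not None and java_major >= 8)
```

If either line prints `False`, install or upgrade the missing prerequisite before continuing with the Spark setup.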